Did you know that customers are over 255% more frustrated now than in pre-pandemic times? In such circumstances, even a tiny support mishap can drive a customer away.

Multiply that mishap by the number of support reps on your team, calculate mistakes on a monthly basis, and you can do the revenue math.

Customer service quality is undeniably tied to customer retention and acquisition. It’s about time you designed a robust customer service quality assurance (QA) program to catch those mistakes.

What is a quality assurance plan for customer service?

Customer service quality assurance programs revolve around monitoring the quality of customer interactions.

Based on a quality assurance scorecard, reviewers rate customer interactions against set quality standards and provide feedback to improve (and maintain) support quality.

Sounds like a complex process? Don’t worry — this guide will help you learn the best practices and tools to make quality an easy, everyday activity for your team.

What are the basic components of a customer support QA program?

- Set your customer service goals.

- Decide who will review customer conversations.

- Decide which conversations to review.

- Create a QA scorecard.

- Communicate the new quality assurance program to the customer service team.

- Plan calibration sessions.

- Measure & improve your quality assurance process.

When support goals are your starting point and personal feedback is the finish line, your customer service QA program is airtight. A thorough quality assurance plan sets your team up for success.

Set your customer service goals

Before you rush into analyzing your contact center interactions, you should have a clear vision of what you want your customer service to look like. In other words, define what “quality” means for your team.

All companies have a unique concept of what matters most for their business and customers. Some focus on delivering personalized assistance to drive product engagement and upsell their products — while others might prefer to keep their interactions short and speedy.

These questions will help you define your support vision and goals:

- What do we do? Your vision can range from offering almost no support (like, you know, how customer service works at Facebook) to going the extra mile and making sure that your support team drives customer loyalty & revenue.

- Whom do we serve? Some companies help all customers equally, while others focus only on paying or premium ones.

- How do we serve them? From customer service teams who focus mostly on providing self-help to companies aiming for support-driven growth, decide what’s the best option for your business.

Once you know what you want your customer service to be like, it’s easier to break the journey down to attainable goals. When you’ve finished setting up your support goals, come back to your vision to check how well your goals align with it.

Customer service goals examples:

- Improve the quality of customer service by pushing both Internal Quality Score (IQS) and CSAT above 90% by the end of the year.

- Respond to all tickets and keep First Response Times under 2 hours on a monthly basis.

- Maintain an even level of support via phone and live chat by keeping IQS over 90% across channels at all times.

- Upsell products in every interaction possible, scoring at least 80% in the “Upselling products” rating category in conversation reviews.

Ultimately, teams that set goals improve faster than teams that don’t. Customer service goals will help you bring your customer service experience to the next level.
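Goals like the examples above are easier to track when they are written down as explicit thresholds. The snippet below is a minimal sketch of that idea, not a prescribed method: the `MonthlyMetrics` fields, the `TARGETS` values, and the `check_goals` helper are all hypothetical names chosen for illustration, and the numbers simply mirror the sample goals (IQS and CSAT above 90%, First Response Time under 2 hours).

```python
from dataclasses import dataclass


@dataclass
class MonthlyMetrics:
    iqs_percent: float           # average internal review score, 0-100
    csat_percent: float          # share of positive survey responses, 0-100
    first_response_hours: float  # average first response time, in hours


# Hypothetical targets mirroring the example goals in this section.
TARGETS = {
    "iqs_percent": 90.0,
    "csat_percent": 90.0,
    "first_response_hours": 2.0,
}


def check_goals(metrics: MonthlyMetrics) -> dict[str, bool]:
    """Return, for each goal, whether this month's numbers meet it."""
    return {
        "iqs": metrics.iqs_percent > TARGETS["iqs_percent"],
        "csat": metrics.csat_percent > TARGETS["csat_percent"],
        "first_response": metrics.first_response_hours
        < TARGETS["first_response_hours"],
    }


# Made-up numbers for one month.
march = MonthlyMetrics(iqs_percent=92.5, csat_percent=88.0, first_response_hours=1.4)
print(check_goals(march))
```

In this made-up month, the IQS and response-time goals are met while CSAT falls short, which is the kind of gap worth bringing back to your support vision.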

✨ Read more about setting the right goals for your customer support team →

Decide who will review customer service conversations

When choosing your QA reviewers, you have four options:

- Manager reviews

- Peer reviews

- Self-reviews

- Specialist reviews

They each have their advantages and disadvantages, and some teams incorporate a combination of several (or all!) in their customer service QA program.

In most cases, managers are responsible for providing feedback to customer service agents and, thus, also do support QA. However, more and more teams are hiring quality assurance specialists to avoid overburdening the managers and to dedicate more time to conversation reviews. 50% of teams who do not have dedicated quality professionals plan to hire for these roles in 2023.

The form that suits your company best depends on your aim, available resources, team setup, and ticket volume. If you’re a small team, we recommend a combination of self-reviews and peer reviews. Large or growing teams should consider hiring a QA specialist or allocating enough time for team leads/managers to review conversations.

✨ Read more about choosing reviewers for your QA program →

Decide which conversations to review

Depending on the ticket volume, you can review all your customer conversations, or target random samples of customer interactions.

However, the best strategy focuses on longer interactions, more complex ones, or conversations with a low customer satisfaction score (CSAT) — especially when you have a quality management solution at your disposal.

In fact, based on our Customer Service Benchmark Report 2023:

- 37% of teams review conversations containing certain keywords or topics;

- 35% of teams review conversations with poor performance;

- 32% of teams use AI to help them select the most useful conversations to review.

Speaking of which, the Spotlight feature of Zendesk QA (formerly Klaus) automatically picks out the lengthier, more complex conversations, as these are proven to contain the highest potential for learning.
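If you don't have a tool doing this for you, the targeted strategy described in this section (long conversations and low CSAT first) can be approximated with a simple filter. This is only a rough sketch under stated assumptions: the `Conversation` fields, the thresholds of 10 messages and a CSAT of 2 on a 1-5 scale, and the sort order are all invented for illustration. It is not how Spotlight or any other product selects conversations.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    id: str
    message_count: int
    csat: Optional[int]  # 1-5 survey rating; None if the customer didn't answer


def needs_review(conv: Conversation, min_messages: int = 10, low_csat: int = 2) -> bool:
    """Flag conversations that are long or that received a low CSAT rating."""
    is_long = conv.message_count >= min_messages
    is_unhappy = conv.csat is not None and conv.csat <= low_csat
    return is_long or is_unhappy


def pick_for_review(conversations: list[Conversation], limit: int) -> list[Conversation]:
    """Return up to `limit` flagged conversations: worst-rated first, then longest."""
    candidates = [c for c in conversations if needs_review(c)]
    # Unrated conversations sort after every rated one (6 is above the 1-5 scale).
    candidates.sort(key=lambda c: (c.csat if c.csat is not None else 6, -c.message_count))
    return candidates[:limit]


# Made-up sample data.
inbox = [
    Conversation("c1", message_count=3, csat=5),
    Conversation("c2", message_count=14, csat=None),
    Conversation("c3", message_count=4, csat=1),
    Conversation("c4", message_count=12, csat=2),
]
print([c.id for c in pick_for_review(inbox, limit=2)])
```

Capping the result with `limit` keeps the review workload predictable, which matters most when ticket volume is too high to review everything.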

✨ Read more about conversation reviews →

Create a QA scorecard

Not to put any pressure here, but the customer service QA scorecard is the foundation of your quality assurance (QA) program. You will use it to rate how well your team’s support interactions meet your internal customer service standards.

As the goal of customer service reviews is to track how well your team is performing against your internal quality control standards, there isn’t a single scorecard that suits all companies.

Here’s what to keep in mind when creating your customer service QA scorecard:

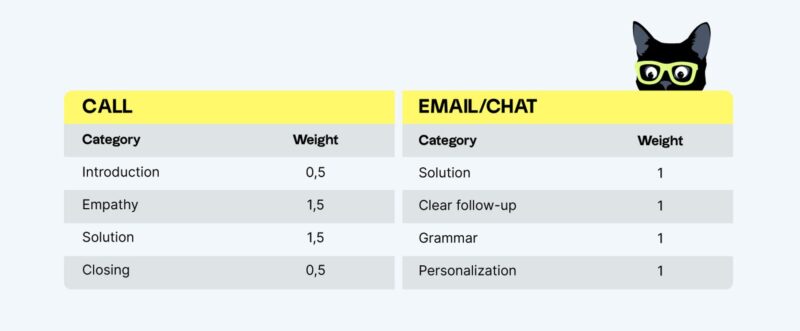

- Use rating categories that reflect your support goals and standards. Make sure that each of your quality criteria is represented by at least one rating category in your scorecard.

- Less (rating categories) is more (reviews). Keep your scorecard short and simple. The more rating categories you have and the more complex the scorecard gets, the less likely you are to actually do regular support QA. Using 3 to 5 rating categories is a common practice.

- Prioritize your rating categories. Your scorecard likely consists of aspects of different importance. Add more weight to those that matter the most and mark some categories critical – meaning that failing in this aspect would mark the entire ticket as failed.

- Choose the rating scale that meets your needs. From 2 points to 11 points (and more), your choice of rating scales can affect your conversation review rates. The larger the scale, the more precise the results – but, at the same time, the more complex assessments will become for the reviewers. Read about the pros and cons of different rating scales and choose wisely!
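To make the weighting and "critical category" ideas above concrete, here is a minimal sketch of how a weighted scorecard could be calculated. Everything in it is an assumption made for illustration: the three categories and their weights, the 5-point scale (0 to 4), the rule that the lowest rating counts as "failing", and the choice to skip non-applicable categories. Your own scorecard and tool may calculate scores differently.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Category:
    name: str
    weight: float
    critical: bool = False


# Hypothetical scorecard: "Solution" matters most and is marked critical.
SCORECARD = [
    Category("Solution", weight=3.0, critical=True),
    Category("Tone", weight=1.0),
    Category("Grammar", weight=1.0),
]
SCALE_MAX = 4  # assumed 5-point scale: ratings run from 0 to 4


def score_conversation(ratings: dict[str, Optional[int]]) -> float:
    """Return a weighted score from 0 to 100 for one reviewed conversation.

    A rating of None means "not applicable" and is left out of the calculation.
    """
    earned = 0.0
    possible = 0.0
    for category in SCORECARD:
        rating = ratings.get(category.name)
        if rating is None:
            continue
        if category.critical and rating == 0:
            return 0.0  # failing a critical category fails the whole ticket
        earned += category.weight * rating
        possible += category.weight * SCALE_MAX
    return 100.0 * earned / possible if possible else 0.0


print(score_conversation({"Solution": 4, "Tone": 2, "Grammar": None}))
print(score_conversation({"Solution": 0, "Tone": 4, "Grammar": 4}))
```

The second conversation scores zero despite perfect tone and grammar, because the critical "Solution" category failed. That is the trade-off to weigh before marking a category critical.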

Looking for some inspiration? According to the Customer Service Quality Benchmark Report 2023, the most popular categories used by customer service professionals are:

- Solution

- Grammar

- Tone

- Empathy

- Personalization

- Following internal processes

- Going the extra mile

Interestingly, the average number of rating categories on a scorecard is 14 (although the median is a far more reasonable 8).
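A gap like this between the average and the median usually means a few teams with very long scorecards are pulling the average up. The numbers below are invented purely to illustrate the effect and are not data from the report:

```python
from statistics import mean, median

# Made-up category counts for nine teams; one team uses a 66-category scorecard.
category_counts = [3, 5, 6, 8, 8, 8, 10, 12, 66]

print(mean(category_counts))    # pulled upward by the single outlier
print(median(category_counts))  # the middle team's scorecard size
```

With this sample, the mean works out to 14 while the median stays at 8, so the median is the better guide to what a typical scorecard looks like.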

If you’re setting up conversation reviews for the first time, you can start with 2-4 basic rating categories and iterate as you go. Your quality standards may change over time, so it’s best to keep your rubric flexible.

Need help? Read more about QA scorecards.

Communicate the new QA program to your customer service team

Most people don’t feel comfortable with the thought of being graded, which is what “support quality assurance” and “conversation reviews” can sound like. How you communicate the process to your team plays an important role in how well it is received.

Here are the three main guidelines for making conversation reviews feel less intimidating:

- Explain the reasons for doing conversation reviews. If your agents are on board with your customer service goals, they’ll understand that internal reviews will help them achieve those objectives.

- Describe what agents will gain from this. Conversation reviews give a nice boost to agents’ professional development and help them advance their careers.

- Use the right feedback techniques to make internal evaluations more constructive and empathetic. Conversation reviews only work if you actually do them and if you provide honest and helpful feedback. You might find this customer service one-on-one meeting template useful, too.

Plan calibration sessions

With your quality assurance process well underway, calibration sessions will soon become a must.

Support reps should receive the same quality of feedback regardless of who reviewed their customer interactions. QA process calibration helps reviewers synchronize their assessments, provide consistent feedback to support reps, and eliminate bias from quality ratings.

Usually, the aspects that require quality assurance calibration are:

- Rating scale to check whether all reviewers understand the different ratings in the same manner. The larger the rating scale, the more important the calibration.

- Failed vs non-applicable cases — when a ticket was handled correctly but a specific aspect of the conversation was missing, reviewers might rate it differently.

- Free-form feedback, AKA the additional comments left for support reps, which is tricky to calibrate. Nonetheless, reviewers should agree on the “length” of feedback included in each review, feedback techniques used in the comments, and the overall style and tone.
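The first two aspects above lend themselves to a quick check before a calibration session: have several reviewers rate the same conversation, then look for categories where they disagree. The sketch below is one possible way to do that, assuming a hypothetical data shape (reviewer name mapped to category ratings, with `None` meaning "not applicable") and an arbitrary tolerance of one point. The `calibration_gaps` helper is invented for this example.

```python
from typing import Optional


def calibration_gaps(
    ratings_by_reviewer: dict[str, dict[str, Optional[int]]],
    tolerance: int = 1,
) -> dict[str, str]:
    """Compare several reviewers' ratings of ONE conversation.

    Returns the categories worth discussing, each with a short reason.
    """
    categories = sorted({cat for r in ratings_by_reviewer.values() for cat in r})
    gaps: dict[str, str] = {}
    for category in categories:
        values = [r.get(category) for r in ratings_by_reviewer.values()]
        scored = [v for v in values if v is not None]
        if scored and len(scored) < len(values):
            # Some reviewers rated the category, others marked it not applicable.
            gaps[category] = "N/A vs rated mismatch"
        elif scored and max(scored) - min(scored) > tolerance:
            gaps[category] = f"spread of {max(scored) - min(scored)} points"
    return gaps


# Made-up ratings from three reviewers on the same conversation (0-4 scale).
reviews = {
    "ana": {"Solution": 4, "Tone": 3, "Upselling": None},
    "ben": {"Solution": 4, "Tone": 1, "Upselling": 0},
    "cy": {"Solution": 3, "Tone": 3, "Upselling": None},
}
print(calibration_gaps(reviews))
```

Here "Tone" is flagged because the ratings differ by two points, and "Upselling" is flagged because one reviewer failed the agent where the others considered the category not applicable. Free-form comments still need a human conversation to calibrate.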

✨ Read more about support QA calibration sessions →

Measure & improve your quality assurance process

What you do with the results of your quality assurance plan is as important as doing conversation reviews in the first place.

Track your team’s key performance indicators over time, schedule calibration sessions, discuss the progress in team meetings, and provide individual feedback in regular one-on-ones.

Customer service quality reviews offer insight into improving interactions and making customers happier. Oftentimes, these are quick and easy things to fix.

Keep in mind that your QA plan doesn’t have to be set in stone. Tweak your rating categories, weights, or scales if needed, but remember to always inform your team about any changes that you make. Even the smallest adjustments are likely to impact their work.

What is an example of a QA program?

All companies have their way of making sure they deliver great customer service. Basically, each support team creates its own customer service quality assurance program based on different QA scorecards and various types of conversation reviews.

If you’re not sure how to set up your quality assurance program, check out how other companies have done it. Here are some examples of how companies handle customer service quality assurance:

- Pipedrive evolved its customer support quality assurance program to increase CSAT & IQS;

- Glovo maintains high-quality support across BPOs and borders;

- Wistia conducts regular peer reviews in groups of three to include a third-party facilitator in all feedback sessions;

- Geckoboard uses agent feedback to increase proactive help to drive product engagement and upsells;

- Agorapulse involves the entire support team in the process of building their quality scorecard and standards.

To recap, here’s a checklist for your customer support QA program

- Set your customer service goals.

- Decide who will review customer conversations.

- Decide which conversations to review.

- Create a QA scorecard.

- Communicate the new QA program to the customer support team.

- Plan calibration sessions.

- Measure & improve your quality assurance process.

That’s it, your quality assurance plan is ready!

Originally published in April 2023; last updated in December 2023.