Customer conversations come in all shapes and sizes. How are you supposed to create a single QA scorecard that covers all the different possibilities?

It’s no surprise that getting started on building a QA scorecard can feel like an uphill battle. Add on the fact that most support managers are running around like cats chasing laser pointers (but doing far more meaningful work), and it’s easy to put off creating a scorecard indefinitely.

But that’s not a good choice for your team, your customers, or your business.

Why you need a quality monitoring scorecard

30% of customer support leaders rate measuring and improving support quality as a significant pain point.

Worse, the average Customer Satisfaction Score (CSAT) response rate is only 19% for chat, and a measly 5% for email and phone support. That means you can’t rely on customer feedback alone to understand how well your team is doing.

Enter the internal quality assurance scorecard, stage left. (Soft applause.)

QA scorecards (often known as quality assurance or quality monitoring scorecards) are critical tools in a support team’s belt. When created with thoughtfulness and with a focus on enabling empathetic and effective support, a QA scorecard can be an invaluable asset.

Scorecards are so valuable because they give your team a shared language to describe what makes a customer interaction magical. When things don’t go so well, they facilitate conversations around improvement. And the process of creating an effective QA scorecard also serves as a forcing function that makes your team stop and consider what matters most to your customers.

Below, you’ll find a collection of real-life scorecard examples from customer experience leaders. Hopefully, they’ll help you kickstart your own QA scorecard creation or evolution!

Different types of quality assurance scorecards

Different support channels bring different customer expectations. And that means you’ll need to tailor your QA scorecards accordingly. While it’s possible to create a jack-of-all-trades scorecard that you can use across channels, a better approach is usually to create different scorecards that reflect each support channel’s distinct characteristics.

To start, let’s talk about what aspects to consider when creating a rubric for your different support channels.

QA scorecard for email support

Email is an asynchronous and long-form support channel.

Because of this, good email QA scorecards place extra emphasis on qualities like thoroughness and grammar. They also look for things like anticipating customer needs — because if it takes hours for replies to come through, every time you can anticipate and proactively answer a question, you’re creating a better customer experience.

Chat quality monitoring scorecard

Chat customer service is fast-paced. It’s often more casual than email or phone support, and it requires support professionals to be fluid and multi-task. It’s not unusual for teammates to juggle 3 or 4 chat conversations at once.

That means your chat scorecard should reflect things like quick responses to ensure customers aren’t left hanging with long awkward pauses. You may also want to include items around matching the customer’s tone or embodying your brand’s preferred voice.

Phone quality assurance scorecard

Great phone QA scorecards emphasize the complex aspects of spoken communication. This includes things like pacing, volume, and active listening, in addition to the core elements your team considers most important across the board.

Providing phone support is often more emotionally and mentally demanding than providing chat or email support, so you’ll want to make sure you’re assessing your call center performance in light of those challenges.

Want more tips on what makes a great QA scorecard? Check out our guide to creating customer service scorecards →

Real-life quality scorecard examples

Replo’s QA scorecard example for chat & email

As Head of Support at Replo, I had the opportunity to create our team’s first quality monitoring scorecard.

It reflects the challenge of our primary channel: Zendesk Messaging. Messaging combines synchronous chat and asynchronous email communication into one stream for customers.

We crafted our QA scorecard, inspired by resources like the Customer Service Quality blog, to reflect that reality.

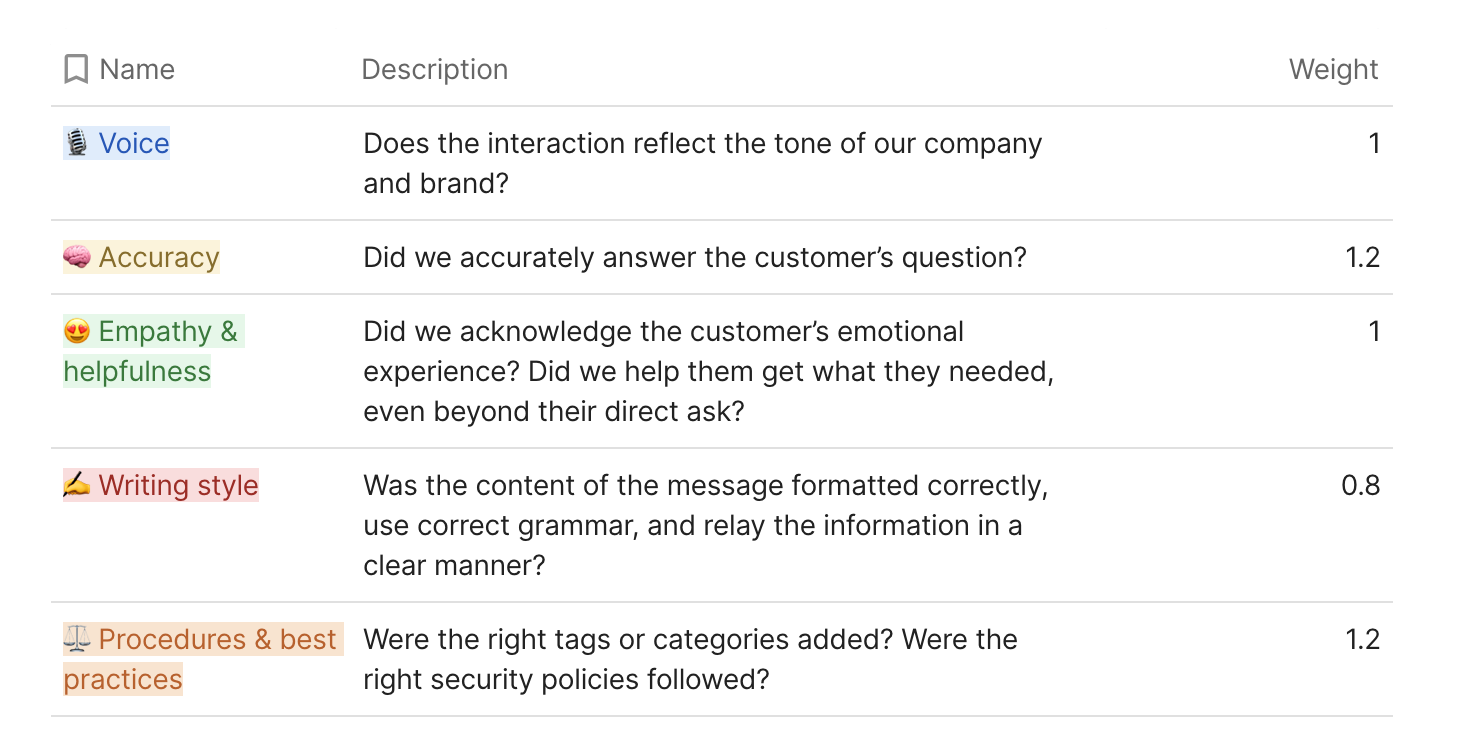

At the highest level, our QA focuses on five major themes that reflect the qualities we want to see in our customer conversations. Each thematic category is weighted, giving us the ability to fine-tune how these values add up to a score for our team.

Pro tip: Weighting scores can give you the ability to emphasize certain aspects of your team’s interactions at different times. If you find your team’s adherence to procedures is behind the curve, you can increase its weight for a time to make it a priority in future reviews.

Beneath each major theme is a series of elements that clarify what the category means. Rather than a “required” list, these items are meant to indicate the generic building blocks of great customer interactions:

- Voice

  - Uses a friendly tone (but matches the customer’s level of formality)

  - Correctly names product features

  - Explains technical concepts at the right level for individual customer understanding

- Accuracy

  - Applies critical thinking to identify and respond to all parts of the question, unstated or stated

  - Provides the correct answer to the question

  - Demonstrates product knowledge

  - Greets the customer with their correct name

- Empathy & helpfulness

  - Understands & acknowledges the customer’s emotions

  - Identifies the customer’s underlying goal, even if unstated

  - Asks clarifying questions and explains the purpose of those questions

  - Avoids accusatory or negative language

- Writing style

  - Adapts prepared responses to the specific customer where appropriate

  - Effectively uses bullets and spacing to enhance readability

  - Uses images or videos when they will serve as better answers

- Procedures & best practices

  - Selects appropriate tags/categories for the conversation

  - Correctly assigns the right group, if needed

  - Follows internal policies where appropriate (e.g. refunds, escalations)

  - Leaves helpful and clear internal notes

  - Applies defined troubleshooting techniques

Calculating the score

Each theme is given a score between 1 and 3, and then an overall quality score is calculated based on the theme’s score and its weight.

- 1 = needs improvement

- 2 = satisfactory

- 3 = excellent

In our reviews, we emphasize that a 2 is a good score. Leaving space for excellence with a 3 gives you the opportunity to reward someone for going above and beyond in a specific category.

Note: If you’re trying to figure out the right scoring system for your QA scorecard, check out this guide to rating scales →

Each conversation review also includes an open space for feedback to expand upon scores, celebrate improvement, or offer words of encouragement.

Total Conversation Score = (Sum of (Each Category Score x Weight)) / 5
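To make the formula above concrete, here is a minimal Python sketch. The weights are hypothetical, since the actual values aren’t listed here; they are chosen to sum to 5 so that dividing by the five themes keeps the result on the same 1 to 3 scale. The names `WEIGHTS` and `conversation_score` are placeholders for illustration only.

```python
# Hypothetical weights: the real values are not given in the article.
# They sum to 5 so that dividing by the 5 themes keeps the total on the 1-3 scale.
WEIGHTS = {
    "voice": 1.0,
    "accuracy": 1.5,
    "empathy_helpfulness": 1.0,
    "writing_style": 0.5,
    "procedures": 1.0,
}


def conversation_score(theme_scores: dict[str, int]) -> float:
    """Total Conversation Score = sum(theme score x weight) / number of themes."""
    if theme_scores.keys() != WEIGHTS.keys():
        raise ValueError("every theme needs exactly one score")
    for theme, score in theme_scores.items():
        if score not in (1, 2, 3):
            raise ValueError(f"{theme}: score must be 1, 2, or 3")
    weighted_sum = sum(theme_scores[theme] * WEIGHTS[theme] for theme in WEIGHTS)
    return weighted_sum / len(WEIGHTS)


# One reviewed conversation: excellent accuracy, procedures need improvement.
example = {
    "voice": 2,
    "accuracy": 3,
    "empathy_helpfulness": 2,
    "writing_style": 2,
    "procedures": 1,
}
print(conversation_score(example))
```

Raising a theme’s weight (and lowering another so the total stays at 5) is one way to shift emphasis between review periods without changing the scale.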

Our team is small, and we do QA reviews every two weeks. These scores are used, along with thorough notes, to engage in coaching conversations during one-on-ones.

For larger teams, more narrowly defined categories can help speed up the quality management process.

Fintech email scorecard

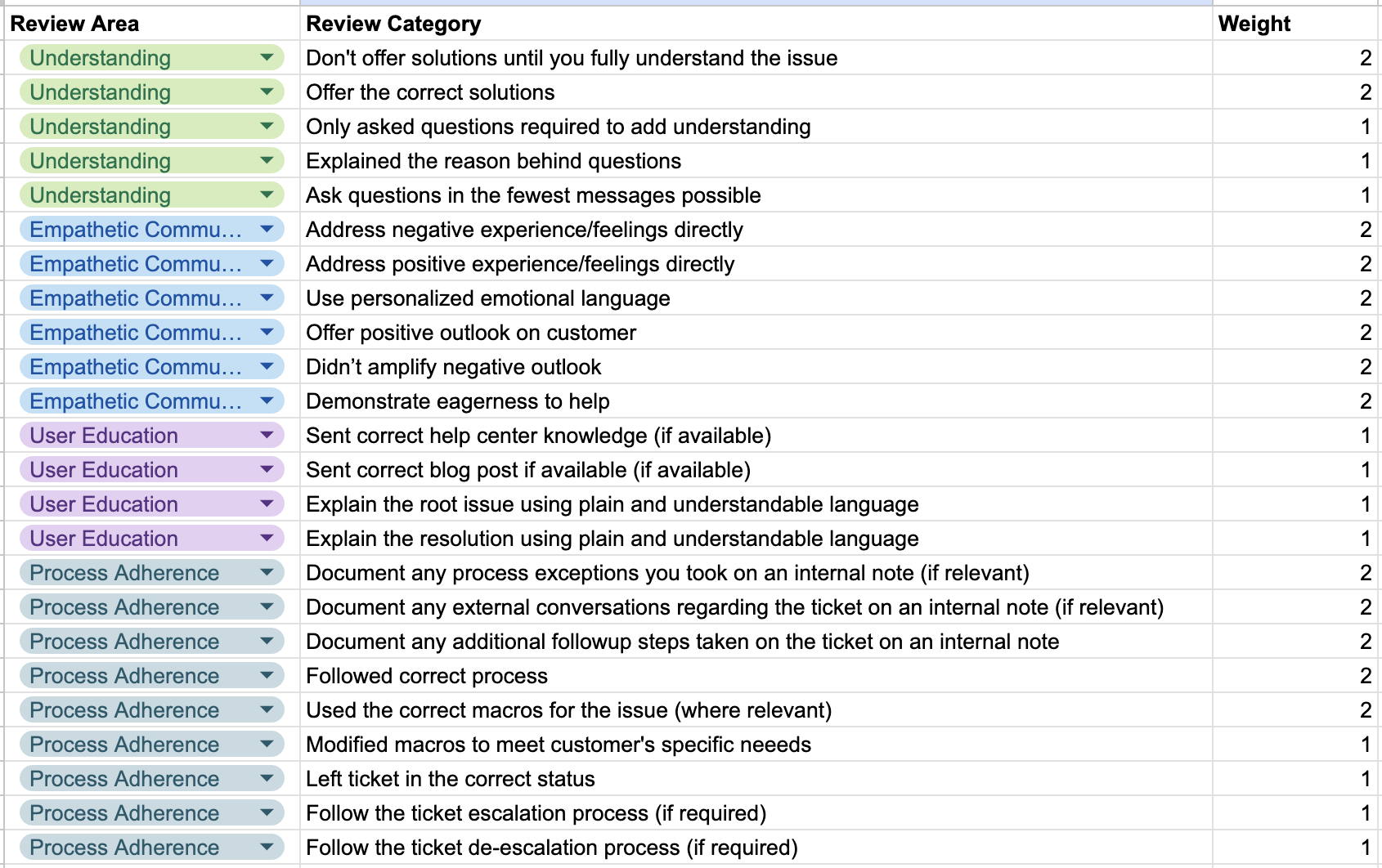

This QA scorecard example comes from an email-only support team in the fintech space (who preferred to remain anonymous).

It focuses more heavily on elements that underline the strengths of email as a channel and shines a light on areas in which email support can fall short.

Since it’s meant for a company in the financial sector, this quality assurance scorecard also includes specific elements about compliance and process adherence. Any company operating in a regulated space can find value in items like this for their own QA scorecards.

In particular, they highlight the value of asking the right questions and giving customers critical context. With the time gap between email responses, it’s easy to leave a customer in limbo for days—like playing an excruciating game of telephone.

Customers are best able to answer your questions when they have context about the ‘why’ behind a question. This scorecard stresses that value to the team.

Calculating the score

This scorecard uses a binary 1 or 0 for each individual category (reflected by a check or no check in the scorecard). Scores across all categories are averaged, both within their area to give an area score and in the aggregate to give a total score to report back to agents.

Review Category Score x Weight = Individual Score

Individual Scores Sum / Total Individual Scores = Ticket Score
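As a rough illustration of the two formulas above, the following Python sketch computes an area score and a ticket score from binary checks. The area names, category names, and weights are hypothetical placeholders, and it assumes that “Total Individual Scores” means the number of individual scores being averaged.

```python
from statistics import mean

# Each reviewed category: (area, category, checked, weight).
# All names and weights below are hypothetical, not taken from the team's scorecard.
review = [
    ("communication", "asks the right questions", True, 1.0),
    ("communication", "explains why each question is asked", False, 1.0),
    ("compliance", "follows the required process", True, 1.0),
    ("compliance", "includes required disclosures", True, 1.0),
]


def individual_score(checked: bool, weight: float) -> float:
    """Review Category Score x Weight = Individual Score."""
    return (1 if checked else 0) * weight


def area_scores(items) -> dict[str, float]:
    """Average the individual scores within each area."""
    by_area: dict[str, list[float]] = {}
    for area, _category, checked, weight in items:
        by_area.setdefault(area, []).append(individual_score(checked, weight))
    return {area: mean(scores) for area, scores in by_area.items()}


def ticket_score(items) -> float:
    """Individual Scores Sum / number of individual scores = Ticket Score."""
    return mean(individual_score(checked, weight) for _a, _c, checked, weight in items)


print(area_scores(review))
print(ticket_score(review))
```

Reporting both numbers side by side is what lets a teammate see that a solid ticket score can still hide a weak area.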

This team provides feedback on a monthly basis, and the depth of their scorecard reflects it. By measuring both an individual score for each area and an overall score, teammates can focus on specific areas where they need to improve.

This methodical approach can be a good fit for customer service teams primarily focused on providing email support.

E-commerce chat scorecard

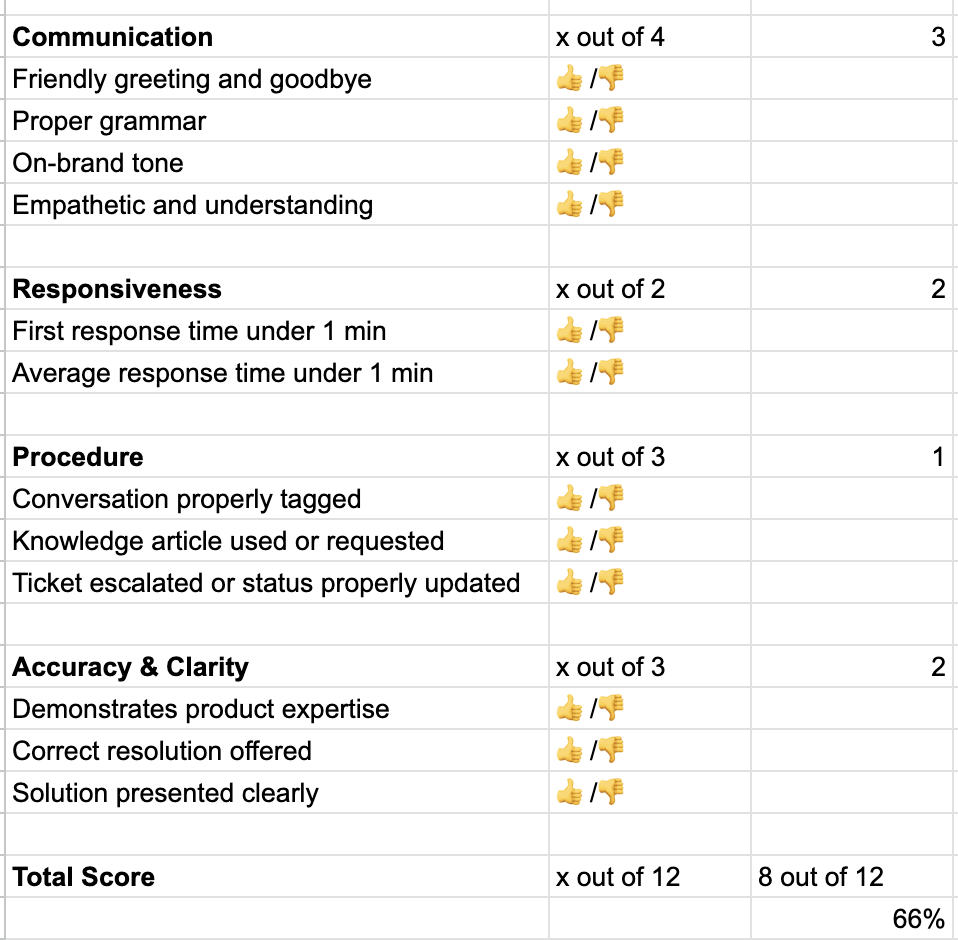

This QA scorecard comes from a large team in the e-commerce industry (who also wanted to remain anonymous). This company works with both an internal team and a business process outsourcing (BPO) partner to manage its support volume.

The lightning-quick pace of chat and the high expectations of customers for quick help are reflected in the elevation of Responsiveness to its own category.

As a high-volume team, they made a decision to focus on a simplified scorecard. This allows them to score large amounts of conversations very quickly — and also means teammates benefit from lots of feedback from conversation reviews every week.

The simplicity of this QA scorecard also makes it easy to communicate to BPO partners across geographic and cultural boundaries, which is important for distributed teams.

Tickets are rated in 4 categories — communication, responsiveness, procedure, and accuracy/clarity. Those categories are broken into attributes that are given either a binary thumbs up or thumbs down for each ticket reviewed.

Calculating the score

Each attribute with a thumbs-up counts as 1 point, giving a possible 12 points total. Scores on this team are expressed as a percentage, with agents aiming for a 70% or higher rating month to month.

Total Score = (Points Achieved / 12) * 100
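The percentage calculation above can be sketched in a few lines of Python. The 12 attributes and the 70% target come from the description above; the function name `total_score` and the sample ratings are made up for illustration.

```python
MAX_POINTS = 12        # one point per attribute, as described above
TARGET_PERCENT = 70.0  # the rating agents aim for


def total_score(thumbs_up: list[bool]) -> float:
    """Total Score = (Points Achieved / 12) * 100."""
    if len(thumbs_up) != MAX_POINTS:
        raise ValueError(f"expected {MAX_POINTS} attribute ratings")
    points_achieved = sum(thumbs_up)  # each True (thumbs up) counts as 1 point
    return points_achieved / MAX_POINTS * 100


# Hypothetical ticket: 9 thumbs up, 3 thumbs down.
ratings = [True] * 9 + [False] * 3
score = total_score(ratings)
print(score, score >= TARGET_PERCENT)
```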

Scaffolding QA scorecard for any customer service team

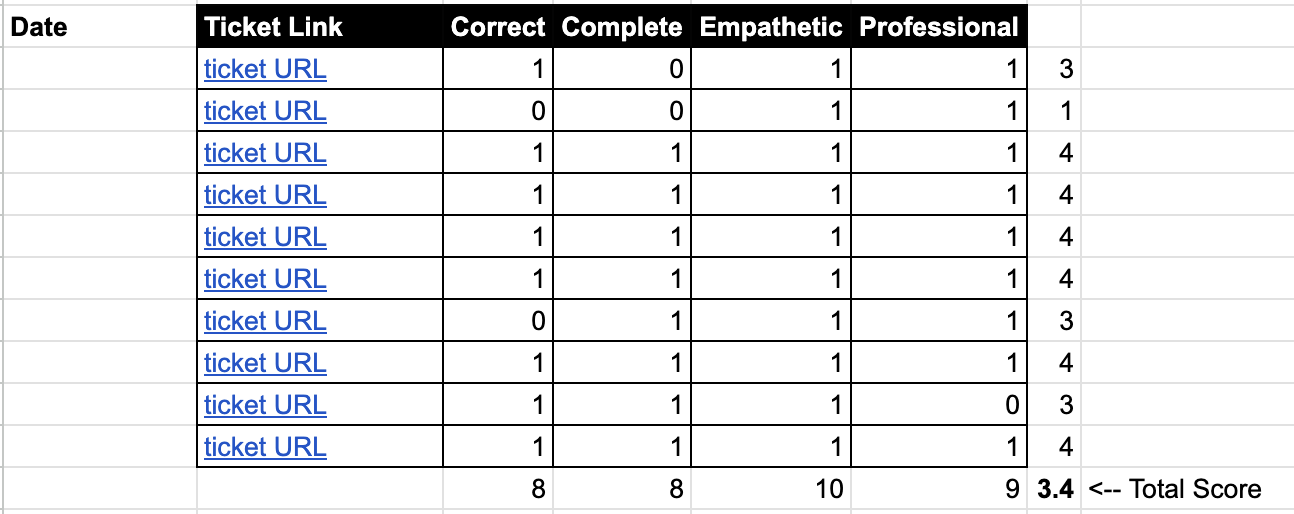

Sahra Mackley, customer support operations expert at Erlang Sea, has helped many teams get their start in formalizing a quality assurance process.

She’s shared a starter QA scorecard, which is a tool she’s worked with in the past as a “scaffold” for customer service teams to build QA processes on top of.

This scorecard example emphasizes providing feedback on a large number of support interactions across the core elements of a good customer conversation: correctness, completeness, empathy, and professionalism.

This method benefits from being straightforward and concise.

For teams who want to start reviewing tickets ASAP (and expand upon the process later), this approach gives a tried and true foundation.

Calculating the score

Scores in this method focus on a bucket of interactions, potentially given on a weekly or monthly basis. Each category earns either a 1 or 0, which then gets summed up into a ticket score.

Ticket scores are averaged out to produce a total average score for each agent during the review period.

Ticket Score = Sum of Category Scores

Total Score = Sum of Ticket Scores / Number of Reviews
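Here is a minimal Python sketch of those two formulas. It assumes the four core elements named above (correctness, completeness, empathy, and professionalism) are the scored categories; the function names and sample reviews are hypothetical.

```python
# Assumption: the four core elements mentioned above are the scored categories.
CATEGORIES = ("correctness", "completeness", "empathy", "professionalism")


def ticket_score(review: dict[str, int]) -> int:
    """Ticket Score = Sum of Category Scores (each category is 1 or 0)."""
    for category in CATEGORIES:
        if review[category] not in (0, 1):
            raise ValueError(f"{category}: score must be 0 or 1")
    return sum(review[category] for category in CATEGORIES)


def total_score(reviews: list[dict[str, int]]) -> float:
    """Total Score = Sum of Ticket Scores / Number of Reviews."""
    if not reviews:
        raise ValueError("at least one review is required")
    return sum(ticket_score(review) for review in reviews) / len(reviews)


# Hypothetical reviews for one agent during one review period.
reviews = [
    {"correctness": 1, "completeness": 1, "empathy": 1, "professionalism": 1},
    {"correctness": 1, "completeness": 0, "empathy": 1, "professionalism": 1},
    {"correctness": 1, "completeness": 0, "empathy": 0, "professionalism": 1},
]
print([ticket_score(r) for r in reviews], total_score(reviews))
```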

Making your own QA scorecard

As you can see from the above QA scorecard examples, they truly do come in all shapes and sizes.

Your team’s QA scorecard will reflect the unique culture of your organization and the individual humans who constitute it. Your industry, your current challenges, and your customers’ expectations will also influence it.

While the above examples offer guidance, they don’t speak to a universal ideal of quality standards. They should inspire you to get creative with your QA scorecard, rather than making you feel like you need to fit your QA program into a specific bucket.

As you’re getting started with building your scorecard, remember that great scorecards start with conversations. Talk to members of your leadership team about the company values. Engage your support teammates on what they think makes your customer experience special. Review feedback you’ve received from customers.

Use this information to define what matters most to your team, then build a scorecard that reflects those priorities.

Using software for customer service QA scorecards

Excel or Google Sheets usually work for small teams with low conversation volumes. The issue is that with large support teams, they can get messy really fast.

We might be a little biased, but we recommend using quality management solutions like Zendesk QA (formerly Klaus) to build & maintain custom QA scorecard(s) and review customer service interactions with ease.