Are you keeping an eye on customer satisfaction? Chances are, you’re familiar with CSAT, CES, and maybe even NPS.

But here’s the thing: while many companies measure these metrics, only about a third of support teams keep tabs on IQS, which is like the North Star for boosting the quality of customer service.

Relying solely on metrics like CSAT isn’t enough anymore. If you’re serious about delivering top-notch customer support, IQS should be your main focus.

What is IQS?

IQS (Internal Quality Score) is a customer support metric that shows how well your team is performing based on internal quality standards that you set yourself.

To track IQS, you have to review support conversations and score them against predefined categories.

Why measure Internal Quality Score?

External quality metrics like Customer Satisfaction Score, Net Promoter Score, or Customer Effort Score only tell you half of the story. They reflect how satisfied your customers are with what you do.

However, they say nothing about, for example, how well your agents followed the internal guidelines, or even if they provided a correct and full solution to the customer’s inquiry.

Leaving customer service quality solely for your customers to judge causes the following problems:

- Customers give feedback on the product and company, not just customer service. For example, customers tend to express their disappointment about declined feature requests in customer surveys, even if the case was actually handled nicely by the support rep.

- Customers don’t see the complex processes behind their inquiries. At times, customers are dissatisfied because your team was unable to meet their expectations. But they have no way of knowing how much time it takes to fix a bug or to build a completely new feature. Again, this might have little to do with how your agents interacted with the user.

- Customers don’t know your quality standards. They rate your interactions from their subjective purrrspective, based on what they think is right. Your quality standards might be higher than that — for example, when taking product knowledge into consideration.

For these reasons, it is essential to analyze your customer service interactions based on your internal quality standards.

How to measure your Internal Quality Score

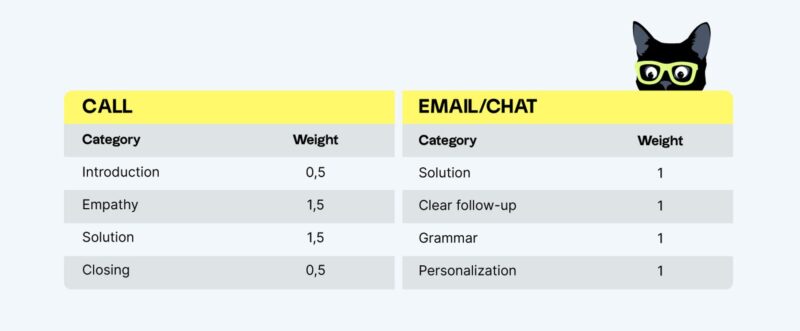

Internal Quality Score (IQS) is the outcome of conversation reviews, expressed as a percentage. Reviewers — peers, managers, or CS QA specialists — analyze customer interactions (such as emails, chats, and phone recordings) and rate the support team’s performance based on a QA scorecard that’s tailored to their quality standards.

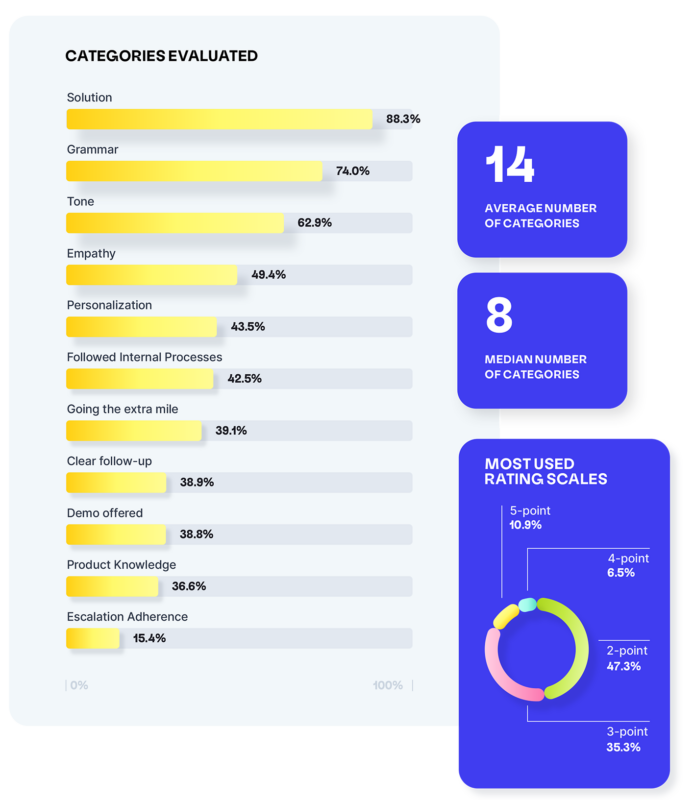

All businesses have their own understanding of what they want their customer-facing interactions to sound and feel like, and how to impress their particular customers. All of these aspects are represented in the scorecard as separate rating categories.

To calculate the IQS for a single conversation, sum up all ratings and divide the total by the maximum available score multiplied by the number of categories.

(Sum of ratings / [Maximum available score × Number of categories]) × 100 = IQS (%)

Then, repeat the process with a representative sample of your tickets to calculate IQS for your entire support team.
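The formula and the sampling step above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any QA tool computes the score: the function names, the four-category scorecard, and the 0 to 5 rating scale are hypothetical, and averaging the per-conversation scores to get a team-level IQS is an assumption, since the aggregation method is not spelled out here.

```python
from statistics import mean


def conversation_iqs(ratings, max_score):
    """Return the IQS (%) for one reviewed conversation.

    ratings:   one rating per scorecard category
    max_score: the highest rating a single category can receive
    """
    if not ratings:
        raise ValueError("at least one category rating is required")
    # (Sum of ratings / [Maximum available score x Number of categories]) x 100
    return sum(ratings) / (max_score * len(ratings)) * 100


def team_iqs(reviews, max_score):
    """Average the per-conversation IQS over a sample (assumed aggregation)."""
    return mean(conversation_iqs(r, max_score) for r in reviews)


# Hypothetical sample: three reviewed conversations, four categories each,
# every category rated from 0 to 5.
sample_reviews = [
    [5, 4, 5, 4],  # 18 out of 20
    [3, 4, 4, 3],  # 14 out of 20
    [5, 5, 5, 5],  # 20 out of 20
]

single = conversation_iqs(sample_reviews[0], max_score=5)
team = team_iqs(sample_reviews, max_score=5)

print(f"Single conversation IQS: {single:.1f}%")  # 90.0%
print(f"Team IQS: {team:.1f}%")                   # 86.7%
```

If your scorecard weights some categories more heavily than others, the plain sum would need to become a weighted sum. That variation is left out of this sketch.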

Using quality assurance software to measure Internal Quality Score

Reviewing conversations and measuring IQS manually might feel a little daunting, which is why more and more support teams are switching to dedicated quality management software.

By enriching your help desk data and introducing advanced AI-powered functionalities into your QA program, you can easily calculate and monitor your IQS support metric over time and make data-driven decisions about how to improve customer service quality, provide better support, and redefine support goals.

Here’s what Zendesk QA (formerly Klaus) brings to the table:

- Sentiment Analysis for finding conversations where the customer displayed delight or frustration

- Spotlight for discovering conversations that are critical to review

- Conversation Insights and its powerful data-mining capabilities to make sense of your help desk data

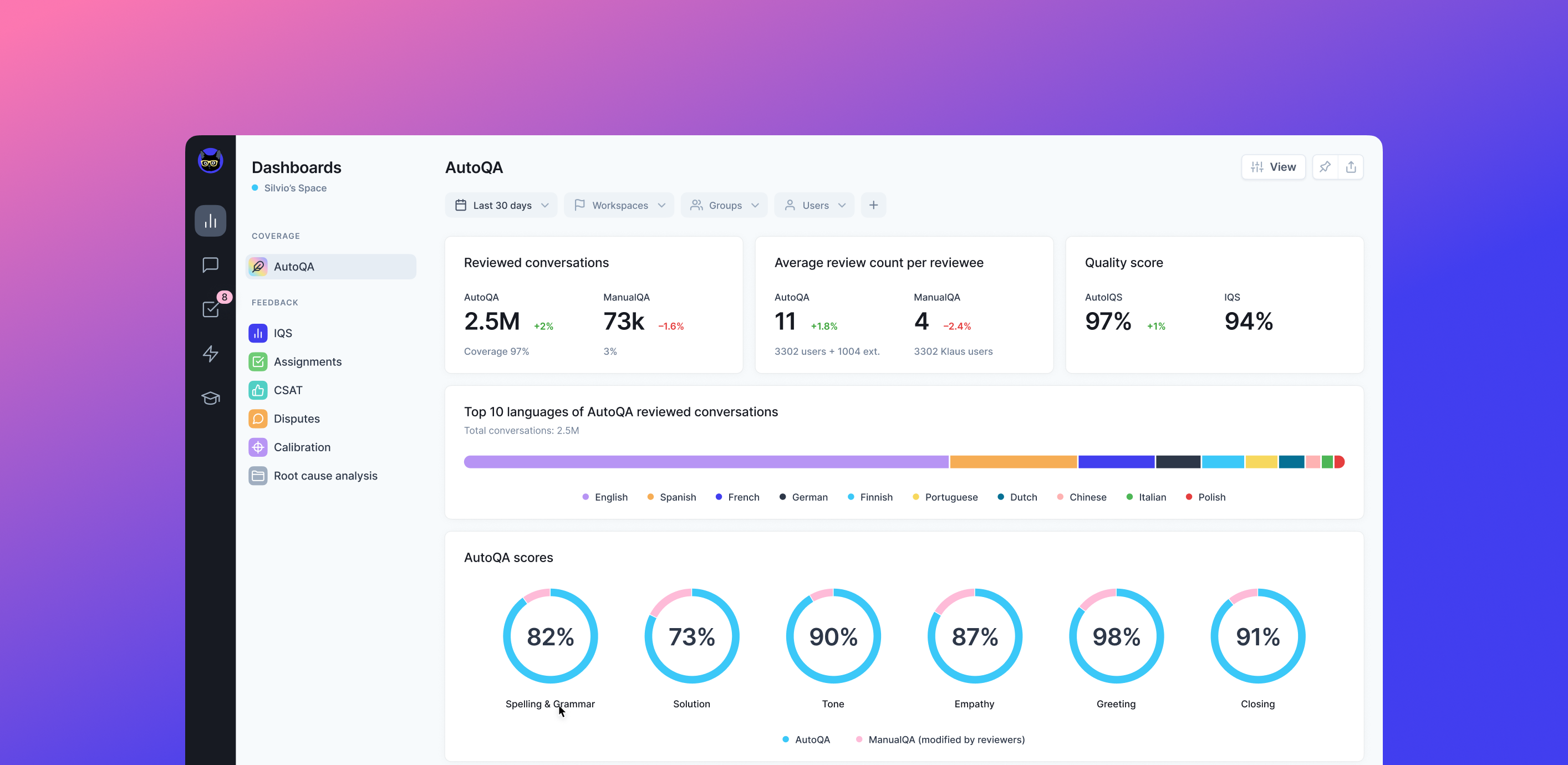

- AutoQA to standardize your QA processes and achieve 100% coverage by automatically scoring every agent and support interaction

Internal Quality Score (IQS) benchmark

No matter how you choose to calculate your Internal Quality Score, once you have it, you can compare it with other teams’ scores.

According to the latest Customer Service Quality Benchmark Report, the IQS benchmark for 2023 is 88%.

What’s more, 48% of teams use conversation reviews to track IQS support scores over time. Are you?

How to improve IQS?

Successful customer service teams don’t just track IQS; they also aim to improve it. If that’s something you’re interested in as well, consider the following:

1. Pair customer service quality and customer satisfaction scores

Measuring both Customer Satisfaction Score (CSAT) and IQS will help you understand what impacts customer cat-isfaction in your company. If you notice low CSAT scores, internal reviews can help you get to the bottom of the issues.

In many cases, customer support reps are not the ones responsible for low scores — the quality of your products, feature roadmap, or shipping policy can also contribute to unsatisfied customers.
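One way to picture this pairing is to compare the two scores conversation by conversation. The sketch below is illustrative only: the record fields, the 0 to 100 scale for both scores, the threshold values, and the labels are all hypothetical assumptions, not benchmarks or features of any tool.

```python
def diagnose(csat, iqs, csat_threshold=70.0, iqs_threshold=85.0):
    """Classify a conversation by comparing its CSAT and IQS (both in %).

    The default thresholds are illustrative assumptions, not benchmarks.
    """
    low_csat = csat < csat_threshold
    low_iqs = iqs < iqs_threshold
    if low_csat and low_iqs:
        return "support handling needs work"
    if low_csat:
        # The agent met internal standards, so the cause is likely elsewhere.
        return "look beyond support (product, roadmap, policy)"
    if low_iqs:
        return "customer happy, internal standards missed"
    return "on track"


# Hypothetical records pairing both scores for the same conversation.
conversations = [
    {"id": "T-1", "csat": 40.0, "iqs": 95.0},
    {"id": "T-2", "csat": 35.0, "iqs": 60.0},
    {"id": "T-3", "csat": 90.0, "iqs": 70.0},
    {"id": "T-4", "csat": 95.0, "iqs": 92.0},
]

labels = {c["id"]: diagnose(c["csat"], c["iqs"]) for c in conversations}
for ticket_id, label in labels.items():
    print(ticket_id, "->", label)
```

Here T-1 is the case described above: the customer was unhappy even though the review found the conversation well handled, which points the investigation away from the support rep.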

2. Hold regular feedback sessions with your support reps

To ensure your team is performing at their best, it’s important to provide regular feedback to your support reps. This will help identify areas for improvement and allow them to develop their professional skills.

44% of the surveyed teams use conversation review feedback in 1:1 meetings and coaching sessions with support reps. By using QA reviews as a starting point, managers can train and coach agents with precision — and track results with regular reviews over time.

3. Use AutoQA to have all your support interactions reviewed

As companies strive towards 100% coverage, the holy grail of quality assurance reviews, it is no surprise that achieving it through human effort alone continues to be a struggle.

Luckily, Zendesk’s AutoQA automatically scores support interactions across multiple categories and languages without requiring additional resources. It not only eliminates the issues related to manual conversation reviews but also means:

- Handling huge ticket volumes in less time without missing hidden gems of information

- Ensuring all your agents and categories are automatically covered

- Improving efficiency and easing your team’s overall workload

But there’s more to AutoQA than speed and effectiveness. It provides a scalable solution, delivering increased accuracy and the ability to identify potential errors that may be missed by your human reviewers — ensuring that your support quality is always top-notch.

IQS support metric — limitations

As already mentioned, IQS is not the easiest support metric to track without dedicated tools.

On average, only 2% of support interactions are manually evaluated. Unfortunately, that’s not enough to have a complete overview of your support quality or make informed decisions about potential improvements.

Moreover, human reviewers might be biased (without even realizing it) or have trouble understanding the rating system. It’s important to make sure everyone is on the same page before evaluating anything, as skewed results can be detrimental to your business.

On top of bias, a loss of focus or a lapse in human judgment makes it easy for errors to happen. Imagine reading through a long conversation thread over and over again, trying to catch spelling errors.

That’s precisely why it is essential to rely on QA automation and hold regular calibration sessions when measuring and continuously improving your Internal Quality Score.

Even so, you definitely want to see context when, for example, emotions run high and the conversation has strayed from the original problem. A machine can tell you that this conversation went longer than average. But that could mean the agent did not control the conversation, or, on the contrary, that they managed to calm down an upset customer and bring them back onto a path to resolution.

Let the machine spot the customer service conversations that need human review, and then let a human reviewer give feedback that makes sense.
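As a rough illustration of that division of labor, the sketch below flags conversations that ran much longer than average and hands them to a human. It is a deliberately simple stand-in heuristic, not how Zendesk QA selects conversations: the message-count field, the `factor` parameter, and the sample data are hypothetical.

```python
from statistics import mean


def flag_for_review(conversations, factor=1.5):
    """Return IDs of conversations with more messages than factor x the average.

    The flag only says "look here"; deciding whether the agent lost control
    or calmed an upset customer is left to the human reviewer.
    """
    average = mean(c["messages"] for c in conversations)
    return [c["id"] for c in conversations if c["messages"] > factor * average]


# Hypothetical help desk export: conversation ID and number of messages.
conversations = [
    {"id": "C-1", "messages": 4},
    {"id": "C-2", "messages": 6},
    {"id": "C-3", "messages": 5},
    {"id": "C-4", "messages": 25},
]

flagged = flag_for_review(conversations)
print(flagged)  # ['C-4']
```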

The time to measure your Internal Quality Score is meow

Almost every aspect of your business is subject to internal quality assessment and review. Whether it’s code reviews for software engineers, quality assurance for physical production, or audits for financial records, most teams are tracking the caliber of their work.

So why should your customer support be any different?

Originally published in February 2022; last updated in October 2023.