With 79% of customer leaders agreeing that customers are smarter and better informed than in the past, call centers need robust action plans to meet rising expectations and improve the quality of phone support.

If you agree — welcome, you’ve come to the right place.

Whether your contact center quality assurance program needs updating, you want a refresher on call center QA tips, or you're just starting out, these call center quality assurance guidelines are here to help.

First things first…

What is contact center quality assurance (QA)?

Contact center quality assurance (QA) is the practice of reviewing and analyzing customer conversations to make sure they meet internal quality standards.

By rating performance and processes across different categories, you can provide data-driven feedback and identify paths to improvement. It's an ongoing process that helps you keep pace with rising customer expectations and keep improving call center quality.

How do you start with quality assurance in a call center?

Whether you provide in-house phone support or outsource it to a BPO (business process outsourcing) provider, the person who picks up the phone when a customer calls creates the first impression of your company.

Call center quality shows how well the team meets and exceeds customer expectations, industry standards, and customer service goals. Achieving high quality in a call center involves a few things, including customer satisfaction, timeliness and compliance, call monitoring and evaluation, and agent coaching. In other words, it takes a robust call center quality program.

Follow the call center quality assurance guidelines below to create your own contact center QA framework and make sure that every customer interaction is a positive one.

Call center quality assurance — best practices

- Have a vision for your customer interactions

- Define your contact center quality assurance criteria

- Set up your call review process

- Make call monitoring systematic

- Use your review feedback

- Consider implementing a call center quality assurance tool

1. Have a vision for your customer interactions

Before you rush into analyzing your call center interactions, you should have a clear vision of what you want your customer service to look like.

Call centers are not a “one size fits all” type of service. Your customer service strategy and call center quality assurance scores should align with your company’s vision and goals. So, when you’re creating a contact center quality assurance review process, start by defining:

The purpose

You probably know your value proposition already, and it likely fits into the sentence “We offer phone support for…”.

Whatever your unique selling point, you want the customer service you deliver to be high quality. And you want to train and retain agents who deliver excellent support that aligns with this purpose.

The target audience

Options range from helping all users equally, including trials and leads, to focusing specifically on paying or premium users. It’s mostly a business decision, but your branding team might also have a say in this, as all your customer-facing activities have an impact on your company image.

The approach

You might prefer your customer service team to give quick, short, to-the-point answers, and that's alright. The times are changing, though. Customers increasingly find easy answers in knowledge bases or get them from chatbots, while customer support reps are expected to go the extra mile and drive support-driven growth.

That being said, your call center vision might range from “We offer phone support to everyone, with a sharp focus on providing quick help” to “Our contact center works with our premium customers by solving their issues, driving product engagement and upsells.”

The route you take determines your call center quality assurance process. Ultimately, evidence-based feedback should help you plan onboarding and training, influence coaching sessions, and drive your key performance indicators.

2. Define your contact center quality assurance criteria

Goal-setting is the part of the call center quality assurance framework that people most often rush through. However, it plays a crucial role in aligning your call center QA reviews with your support vision, so make sure you don't skip this bit.

This step consists of four components to help you build a scorecard that reflects how your team is performing based on your idea of excellent service:

Setting goals

Go back to your support vision and break it down into 2-4 specific customer service goals. For example, do you want to raise customer satisfaction and your CSAT score, provide faster solutions, or improve your agents' product knowledge?

Goals will direct you to the aspects of the conversations that you need to analyze, help you build team cohesion, and pivot quickly if needed.

Choosing rating categories that reflect your goals

Now, it’s time to create your own call center QA scorecard — in other words, an evaluation form that will help you review customer conversations as objectively as possible.

Match each of your goals with at least one rating category on your call center scorecard, such as Solution, Tone, Empathy, Following internal processes, or Going the extra mile.

No matter which ones you choose, make sure all your goals are represented in your contact center QA reviews, but try not to overdo it: one or two rating categories per goal should be enough.

✨ The case for general rating categories: Call center QA reviews should be (relatively) quick and easy for the reviewer and reviewee.

✨ The case for granular rating categories: Each conversation needs to be reviewed in accordance with your universal quality standards.

For example, if you are polishing agents’ product knowledge, include “provided accurate product information” as an assessment criterion. Those looking for ways to make their calls feel warmer and friendlier should include a category for “empathy”, and maybe also “appropriate opening and closing lines”.

Interestingly, the average number of rating categories on a scorecard is 14 (although the median is a far more reasonable 8).

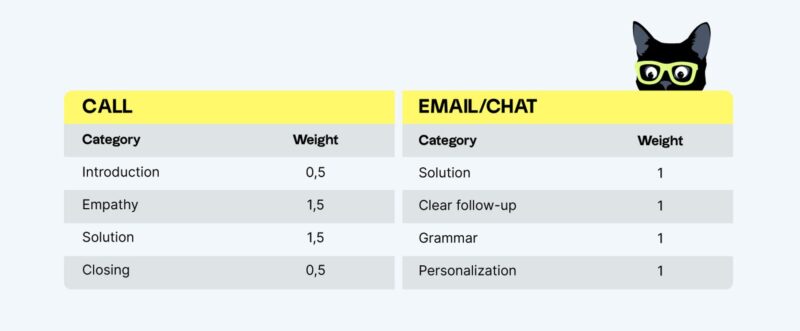

Prioritizing rating categories (if needed)

Turn to your vision and goals to understand which of your rating categories matter the most to you. Give those categories more weight in your internal assessments.

For example, sharing correct product information might be more critical than the agent’s vocabulary. You can also make certain categories critical — passing them is a prerequisite for an overall passing score.

Agreeing upon a rating scale

Implement a scoring system that all reviewers can easily understand, so that they assess conversations in the same way. This can be as simple as a 2-point scale, where reviewers can only give a thumbs up or a thumbs down in each rating category. Or it can be as granular as a score out of 10.
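
To make weights, critical categories, and rating scales concrete, here is a minimal Python sketch of how a single reviewed conversation could be scored. It assumes each rating is normalized to a 0-1 range so that categories on different scales can be combined, that a critical category fails when it lands in the bottom half of its scale, and that the overall pass mark is 80%. The category names, weights, and thresholds are hypothetical examples, not a standard formula.

```python
from dataclasses import dataclass

@dataclass
class Category:
    name: str
    weight: float           # relative importance (hypothetical values)
    scale_max: int          # 2 = thumbs up/down, 10 = score out of 10
    critical: bool = False  # must pass for the whole conversation to pass

def normalize(rating: int, scale_max: int) -> float:
    """Map a rating on a 1..scale_max scale onto 0.0..1.0."""
    if scale_max < 2 or not 1 <= rating <= scale_max:
        raise ValueError(f"rating {rating} is not on a 1..{scale_max} scale")
    return (rating - 1) / (scale_max - 1)

def score_conversation(categories, ratings, pass_mark=80.0):
    """Return (weighted score in %, passed) for one reviewed conversation."""
    total_weight = sum(c.weight for c in categories)
    weighted_sum = 0.0
    critical_ok = True
    for c in categories:
        value = normalize(ratings[c.name], c.scale_max)
        weighted_sum += c.weight * value
        # Assumption: a critical category fails in the bottom half of its scale.
        if c.critical and value < 0.5:
            critical_ok = False
    score = round(100 * weighted_sum / total_weight, 1)
    # Passing every critical category is a prerequisite for an overall pass.
    return score, critical_ok and score >= pass_mark

scorecard = [
    Category("Accurate product information", weight=3, scale_max=2, critical=True),
    Category("Empathy", weight=2, scale_max=5),
    Category("Opening and closing lines", weight=1, scale_max=3),
]
ratings = {"Accurate product information": 2, "Empathy": 4,
           "Opening and closing lines": 2}
print(score_conversation(scorecard, ratings))  # (83.3, True)
```

Normalizing before weighting lets a thumbs-up category and a 5-point category sit on the same scorecard. A critical failure overrides the weighted score, so a call with a high score can still fail.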

According to the Customer Service Quality Benchmark Report 2023, many teams use a binary rating scale to rate categories on a scorecard, although rating scales can be far more granular:

- 2-point rating scale is used by 47.3% of surveyed customer support professionals

- 3-point rating scale — 35.3%

- 5-point rating scale — 10.9%

- 4-point rating scale — 6.5%

Your contact center scorecard is the foundation of your call center QA process, so it’s worthwhile to dedicate time to creating a proper one. If you skip the vision and goal-setting parts, you might end up with assessment criteria that are irrelevant to your call center.

Instead of overwhelming reviewers with dozens of questions, concentrate on the aspects of your team's interactions that matter most to you.

3. Set up your call review process

Before you get going with call reviews, you need to give this process a solid structure. This will make your call center QA consistent, transparent, and understandable for everyone.

Though some people see call center QA reviews as a time-consuming and burdensome procedure, it doesn’t have to be like that. Keep efficiency in mind when designing your call reviews by finding answers to the following quality assurance questions:

Who should conduct your call center quality assurance reviews?

Choose between managers, contact center QA specialists (or quality assurance analysts), peers, and self-reviews. They all have their pros and cons, so see which one works best for you:

- Managers can only review a limited number of cases, but it's still important for them to understand their team's strengths and weaknesses and to see processes in a broader context.

- QA specialists help make measuring and improving call center quality a company-wide priority. Having a dedicated specialist keeps this task from sliding down a to-do list full of other responsibilities.

- Peer call center QA reviews are the most time-efficient form of internal assessment, and they push agents to learn from each other. As long as you hold team calibration sessions, this is an excellent format for promoting shared standards.

- Self-reviews help agents understand their areas of improvement, which is key to their professional growth. Self-reviews are rarely used as the only review format; they work best as part of call center performance reviews.

How many calls should you review?

Unfortunately, the vague answer to this question is ‘it depends’. A more useful question to answer is: which calls should you select for call center QA reviews?

For example, you may want to focus on one or several of the below:

- Complex interactions with a lengthy back-and-forth and no easy solution,

- New agent conversations, as part of their onboarding program,

- Conversations where the customer gave a bad Customer Satisfaction Score (CSAT).

If you are set on a numerical target, it is easier to express your goal as a percentage of the total volume. This keeps your contact center QA reviews statistically relevant as your company grows.
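
One way to combine these focus areas with a percentage target is sketched below in Python. It is a minimal sketch built on a hypothetical `Call` record: calls of 15 minutes or longer stand in for complex interactions, and a CSAT of 2 or lower on a 1-5 scale counts as bad. Priority calls fill the review quota first, and a random sample of routine calls tops it up; all thresholds are assumptions to tune.

```python
import random
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Call:
    call_id: str
    agent: str
    duration_min: float   # talk time in minutes
    csat: Optional[int]   # 1-5 survey score, None if the customer skipped it
    agent_is_new: bool    # agent is still in onboarding

def is_priority(call: Call, long_call_min: float = 15.0, low_csat: int = 2) -> bool:
    """Complex (long) calls, new-agent calls, and bad-CSAT calls go first."""
    bad_csat = call.csat is not None and call.csat <= low_csat
    return call.duration_min >= long_call_min or call.agent_is_new or bad_csat

def select_for_review(calls: List[Call], sample_pct: float = 0.05,
                      seed: Optional[int] = None) -> List[Call]:
    """Select sample_pct of all calls, filling the quota with priority calls first."""
    if not calls:
        return []
    rng = random.Random(seed)
    # A percentage quota scales automatically as call volume grows.
    quota = max(1, round(len(calls) * sample_pct))
    priority = [c for c in calls if is_priority(c)]
    others = [c for c in calls if not is_priority(c)]
    if len(priority) >= quota:
        return rng.sample(priority, quota)
    # Top up with routine calls so the sample also reflects everyday quality.
    return priority + rng.sample(others, quota - len(priority))
```

Passing a fixed `seed` makes a selection reproducible, which can help when reviewers need to audit why a given call was picked.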

💡 Call center quality assurance tips from Ahmad Baydoun: Start by deciding how many of your customer calls you need to review. Make sure your review sample is big enough to represent the quality trends in your team, for example 5-10% of all call recordings. Then calculate how many people it will take to listen to that share of calls and provide meaningful feedback to all agents.
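
The headcount calculation in this tip is simple arithmetic, sketched here in Python. Every input is an assumption to replace with your own numbers: monthly call volume, average call length (reviewers listen to the entire call), extra minutes per review for scoring and writing feedback, and the hours each reviewer can dedicate to reviews per month.

```python
import math

def reviewers_needed(monthly_calls: int, sample_pct: float, avg_call_min: float,
                     extra_min_per_review: float, review_hours_per_reviewer: float):
    """Estimate monthly review workload and the reviewer headcount it requires."""
    reviews = round(monthly_calls * sample_pct)                # calls reviewed per month
    minutes_per_review = avg_call_min + extra_min_per_review   # full listen + feedback
    total_hours = reviews * minutes_per_review / 60
    headcount = math.ceil(total_hours / review_hours_per_reviewer)  # round up to whole people
    return reviews, round(total_hours, 1), headcount

# Hypothetical team: 20,000 calls/month, 6-minute calls, 10 extra minutes
# per review, 120 hours of review time per reviewer per month.
print(reviewers_needed(20_000, 0.05, 6, 10, 120))  # (1000, 266.7, 3)
print(reviewers_needed(20_000, 0.10, 6, 10, 120))  # (2000, 533.3, 5)
```

Under these assumptions, moving from a 5% to a 10% sample takes the team from three reviewers to five, which is the kind of trade-off worth seeing before you commit to a target.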

4. Make call monitoring systematic

As you start doing call center QA reviews, keep in mind that consistency is your key to a successful call center QA framework. To get a picture of how your phone support is performing and to track it over time, you should approach it in a well-organized manner.

For example, you may want to set a goal to ensure that every agent conducts several peer reviews per week, and all managers conduct several reviews per agent per month. These basic rules will help you stay on track:

Listening to the entire call

Though sometimes you might be tempted to skip the small talk and jump to the next question on your scorecard, you should always pay attention to the full conversation. Otherwise, you won’t get an accurate notion of the agents’ tone and style. Plus, you might miss some crucial information buried in the small talk.

Incorrect ratings do an injustice to the reviewed support rep and undermine the entire contact center QA process.

Staying consistent

Systematic call reviews help with quality control and keep you informed about what is happening in your call center. If you notice a decline in any of the call center metrics you track, internal call center evaluations will help you identify the areas your customer service team needs to work on.

Contact center quality assurance review is not a one-time project. It’s an ongoing process that helps you boost and maintain the quality of your support team. Your products and teams are likely to change over time, so you need to make sure that both your new hires and old-timers are meeting your call center quality standards alike.

Investing time into quality calibrations

No matter how well you’ve trained your team, there’s always a chance that different reviewers score the same conversation differently. That’s just human.

Quality calibrations will help you ensure consistency throughout all call center QA reviews. There are different methods and setups for QA calibrations, but the goal of them is always the same: to make sure all reviewers evaluate calls the same way. That’s how you can make sure that all agents receive the same level of feedback regardless of which reviewer scored their performance.
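
One common way to check calibration is to compare each reviewer's scores on a shared set of conversations with the consensus score agreed in the calibration session. The Python sketch below assumes scores are expressed as percentages and uses a hypothetical tolerance of 5 percentage points of mean absolute deviation; reviewers outside that tolerance are candidates for the next calibration discussion.

```python
from statistics import mean

def calibration_report(reviewer_scores, consensus, tolerance=5.0):
    """Compare each reviewer with the calibrated consensus.

    reviewer_scores: {reviewer: {conversation_id: score_pct}}
    consensus:       {conversation_id: score_pct agreed in the session}
    Returns {reviewer: (mean absolute deviation, within tolerance?)}.
    """
    report = {}
    for reviewer, scores in reviewer_scores.items():
        deviations = [abs(score - consensus[cid])
                      for cid, score in scores.items() if cid in consensus]
        if not deviations:
            continue  # reviewer scored none of the calibration conversations
        mad = mean(deviations)
        report[reviewer] = (round(mad, 1), mad <= tolerance)
    return report

consensus = {"c1": 80, "c2": 60, "c3": 90}
scores = {
    "Ana": {"c1": 82, "c2": 58, "c3": 90},
    "Ben": {"c1": 95, "c2": 75, "c3": 92},
}
print(calibration_report(scores, consensus))
# {'Ana': (1.3, True), 'Ben': (10.7, False)}
```

Tracking this deviation across sessions shows whether calibration is actually bringing reviewers closer together over time.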

💡 Call center quality assurance best practices from Ahmad Baydoun: One of the most common things that contact centers miss in their quality programs is doing calibration sessions with their customers. Having company representatives in quality calibrations helps to align your contact center quality assurance program with your customer’s expectations. This makes sure you don’t drift too far away from their vision.

Start by benchmarking your quality goals with your customers and then invite them to occasionally join your calibration sessions. You’ll get a better understanding of how they would evaluate certain situations, and, more importantly, why they think this way. They know their business better, so it’s up to them to decide what’s the right way of handling customer calls.

5. Use your review feedback

Here comes one of the most important call center quality assurance tips: Everything you learn from the customer interactions should be used in a meaningful way — as feedback to agents, or input for other teams.

Before diving into your team's call recordings, plan how you will use the information you gather. Don't think of call center quality assurance as a way to pinpoint your agents' mistakes; think of it as an opportunity to help your team grow through feedback. Make the most of your data through call center training, trend spotting, and accurate reporting. Other uses include:

Input for 1:1 meetings

Use call quality monitoring as the basis for giving feedback to your call center agents. Find their areas of growth and set actionable and time-bound goals, then see how your team progresses from call to call.

Food for thought and self-reflection

Analyzing their own performance against internal call center quality standards helps agents improve their interactions. According to a study published in the Journal of Business Research, self-assessments boost the quality of customer service and can increase your NPS by 5%.

💡 Quality assurance tips for call centers from Ahmad Baydoun: Use QA results and internal quality scores (IQS) to highlight the outliers in your team and track their performance over time. Act accordingly when you see no positive change in their performance. Only if performance doesn't improve after a series of coaching sessions, one-on-ones, and retraining should you start an exit process; unfortunately, persistent outliers do have to be replaced. If you hire the right people, train them well, and coach them through calibrations and regular feedback, the chances of having outliers in the team are very low.
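
Tracking outliers over time can start with a simple rule. In this Python sketch, the rule itself is an assumption for illustration: an agent is flagged when their IQS stayed below a hypothetical 80% target for each of the last three periods without improving. As the tip stresses, a flag is a trigger for coaching and one-on-ones, not an automatic exit decision.

```python
def flag_outliers(iqs_history, target=80.0, periods=3):
    """Return agents whose recent IQS is consistently below target and not improving.

    iqs_history: {agent: [IQS per period, oldest first]}
    """
    flagged = []
    for agent, scores in iqs_history.items():
        recent = scores[-periods:]
        if len(recent) < periods:
            continue  # not enough history to judge a trend yet
        below_target = all(s < target for s in recent)
        improving = recent[-1] > recent[0]
        if below_target and not improving:
            flagged.append(agent)
    return sorted(flagged)

history = {
    "Ana": [85, 88, 90],   # above target
    "Ben": [72, 70, 71],   # below target, flat
    "Cleo": [70, 75, 79],  # below target, but improving after coaching
    "Dev": [78],           # new hire, too little history
}
print(flag_outliers(history))  # ['Ben']
```

Requiring several periods of data avoids flagging new hires on a single bad month, and the improvement check gives coaching time to show results.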

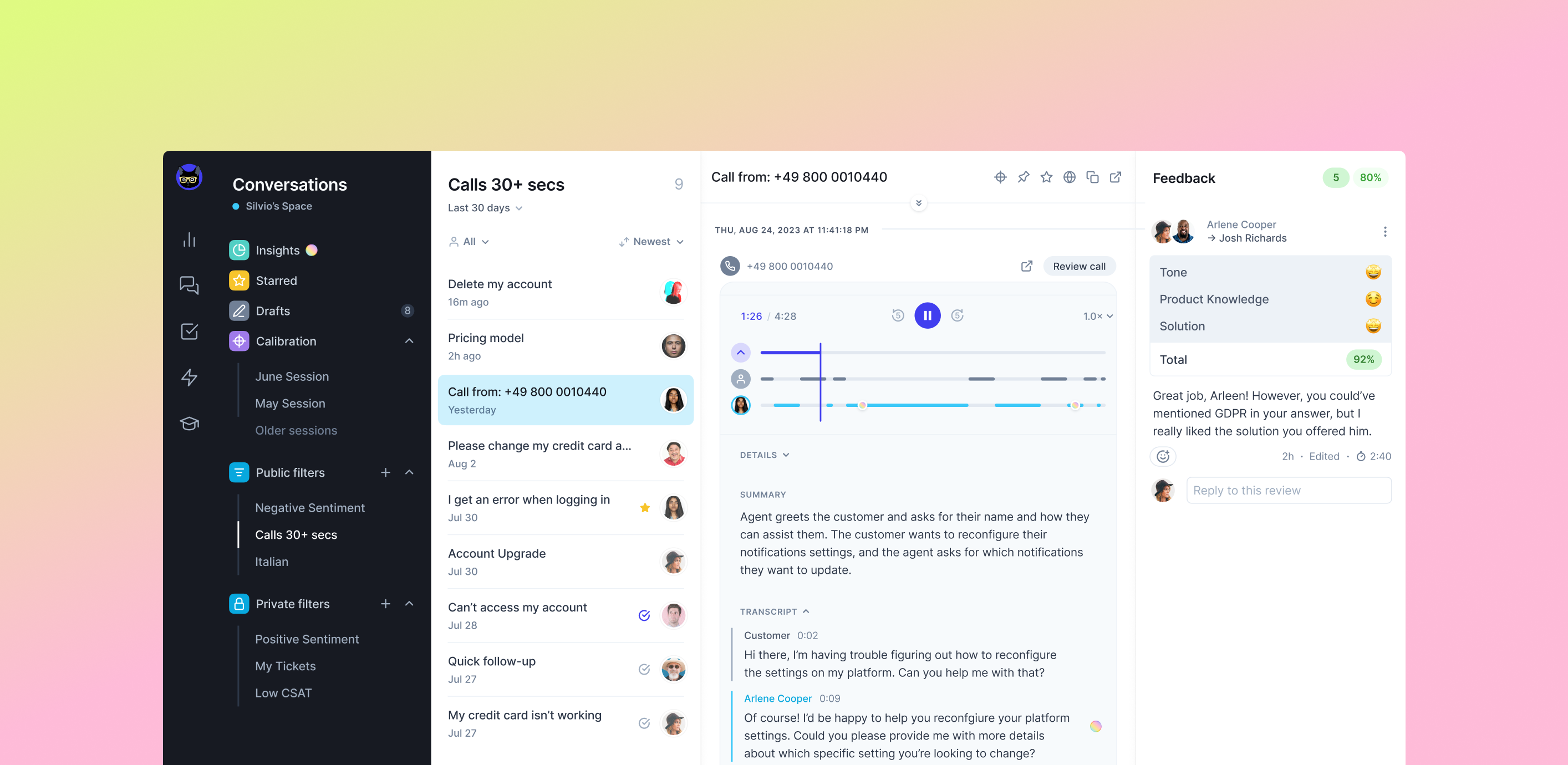

6. Consider implementing call center quality assurance software

Having a proper setup for your call center QA reviews is crucial because it helps you keep track of your team’s performance over time and provide consistent feedback to your agents. The more convenient, flexible, and transparent the procedure is for everybody, the more likely they are to participate in it.

Although companies often start by using spreadsheets to organize their internal feedback, spreadsheets quickly become hard to scale and manage. Contact center quality assurance solutions reduce the time spent on call center QA reviews and streamline quality control with features such as VoiceQA, automatic call summaries, and speech-to-text.

Help your QA team conduct quality assurance reviews in the call center efficiently by giving them the right tools and frameworks to work with. You’ll save your team heaps of time and tedious work they’d otherwise spend on managing the review procedures manually.

Automated quality assurance for call centers

Manual reviews still play a crucial role in the call center quality assurance process. The “human element” is essential for interpreting complex situations, offering detailed feedback, and realizing the full potential of call center quality assurance.

However, the most challenging aspect of the feedback loop also stems from human behavior:

- On average, only 2% of conversations are reviewed manually,

- Manual reviews are time-consuming and don’t easily scale with the growing volume of customer calls, potentially delaying feedback and impeding service improvements,

- Human error is unavoidable and can result in flawed assessments and unreliable data.

Automated call center reviews can help your QA team analyze a large volume of interactions quickly. In fact, automated call center quality assurance can expand your conversation review capacity up to 50 times, offering 100% coverage. This lets you gauge overall customer sentiment and team performance without listening to every call, whatever your volume of interactions.

Manually sifting through customer calls to identify issues often feels like using a metal detector on a beach in hopes of finding treasure. Automated quality assurance for call centers acts like a treasure map, pointing you directly to the problem areas. Consider this when picking call center QA software for your needs.

Ready to launch your contact center quality assurance program?

The quality of your contact center is highly dependent on the success of your quality assurance program.

In fact, setting up a dedicated team for the contact center quality assurance framework is necessary for all call centers that want to offer their customers personalized and high-quality support services. Regular call reviews and agent feedback iron out inconsistencies in your support team’s performance.

Follow the call center quality assurance guidelines above and you’ll be ready to launch your QA program in no time.

Originally published in December 2021; last updated in October 2023.