Jump to the customer service scorecard template right away →

Many customer support leaders find it challenging to measure and improve the quality of support, with 30% considering it a significant issue.

To make matters worse, the Customer Satisfaction Score (CSAT) response rates are low, standing at only 19% for chat and a mere 5% for email and phone support. This means relying on customer feedback alone isn’t a reliable way to gauge your team’s performance.

Now, introducing the customer service scorecard. (Cue soft applause.)

What is a customer service QA scorecard?

A customer service QA scorecard is an evaluation form that helps you review customer conversations as objectively as possible. It speeds up the review process while making feedback specific and measurable.

When done properly, that is. The trick is to build a QA scorecard in a way that best supports your customer service goals, quality assurance standards, and customer expectations.

How to create a customer service scorecard, then?

Essentially, you have two options. The easier one is to download a customer service scorecard template [Excel sheet] and take your customer service quality assurance process from there:

✨ Download the customer service QA scorecard — Excel template

After helping hundreds of support teams in building the perfect scorecard, we’ve created the Ultimate Customer Service Scorecard Excel Template for Quality Assurance. It’s the easiest and quickest way to get going with support quality assurance if you don’t have a QA process in place or are looking for a way to revamp your existing customer service QA scorecard.

Our customer service QA scorecard template in Excel comes with sample data that’ll help you understand how it works. (Yes, it’s that easy.)

Now, customer service QA scorecard examples and Excel templates are handy — but in order for them to be effective, you should always tailor them to your quality standards. If possible, try building the customer service scorecard yourself.

Start by figuring out what “quality” means for your business, then verify your assumptions, and set specific goals for your team. Ideally, the contents of your customer service scorecard should reflect those.

If you need more guidance on creating your own customer service scorecard, keep reading.

What should be included in a customer service scorecard?

1. Reviewers and support reps

List all the participants of the quality management process in the document to make the conversation review process clear and transparent.

2. Review period

Use a new document for every week, month, or quarter, depending on your support volume. Keep the review lists short and easy to use.

3. Review goals

Define the proportion (%) of your conversation volume that you want to review every month. This will help you scale your support quality assurance program as your team grows.
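To make the review goal concrete, here is a minimal sketch that turns a percentage target into review counts. The function name, the example volume of 4,000 conversations, the 5% target, and the four reviewers are all illustrative assumptions, not figures from this article.

```python
import math


def monthly_review_quota(conversation_volume: int, review_share_pct: float, reviewers: int) -> dict:
    """Translate a review goal (% of monthly volume) into concrete counts.

    All names here are hypothetical; adapt them to your own process.
    """
    if reviewers <= 0:
        raise ValueError("reviewers must be a positive number")
    # Round up so the target share is always met, never undershot.
    total_reviews = math.ceil(conversation_volume * review_share_pct / 100)
    per_reviewer = math.ceil(total_reviews / reviewers)
    return {"total_reviews": total_reviews, "per_reviewer": per_reviewer}


# Assumed example: 4,000 conversations a month, a 5% review goal, 4 reviewers.
quota = monthly_review_quota(conversation_volume=4000, review_share_pct=5, reviewers=4)
print(quota)
```

Because the goal is expressed as a share rather than a fixed number, the quota grows with your volume without anyone having to renegotiate the target.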

4. Rating categories

Now comes the fun part: For every conversation you review, you’re going to check its quality based on the rating categories, over and over again.

As you can tell, choosing the categories is a pretty important step, and your choices should reflect your company’s support goals and values.

You can add as many categories as needed. To focus on specific aspects of support quality, you can rate the tone, format, rapport with the customer, product knowledge, and much more.

But, there’s a catch: Too many categories can lead to decision fatigue for your reviewers, whereas using too few might not provide you with enough insights into your support operations & agent performance.

Technically, there’s such a thing as too many cat(egorie)s, but we think every business can find a sweet spot between 3 and 7 of those. In fact, around 89% of our customers use between 2 and 4 rating categories for their internal conversation reviews.

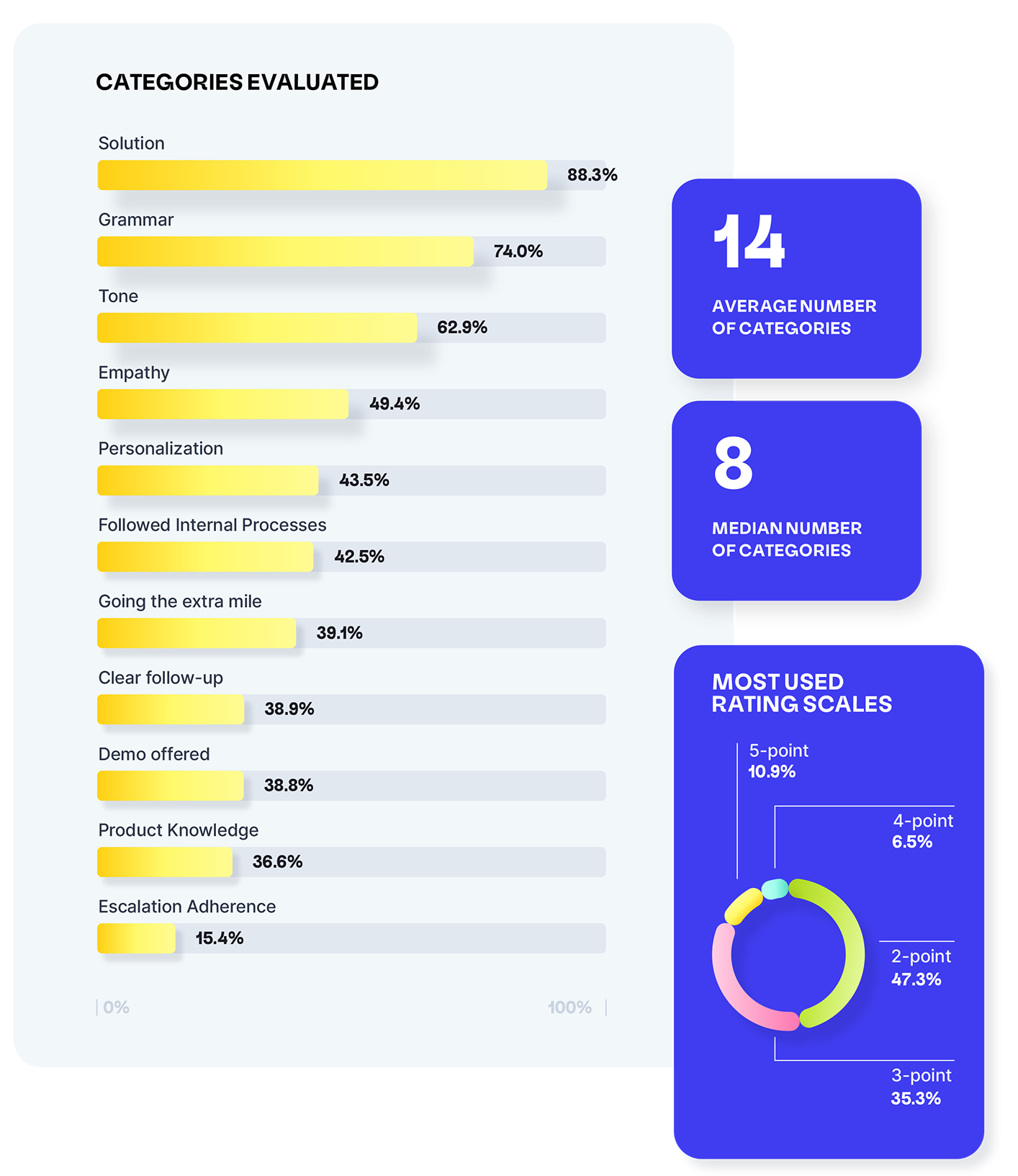

Looking for some inspiration? According to Customer Service Quality Benchmark Report 2023, the most popular categories used by customer service professionals are:

- Solution

- Grammar

- Tone

- Empathy

- Personalization

- Following internal processes

- Going the extra mile

Interestingly, the average number of rating categories on a scorecard is 14 (although the median is a far more reasonable 8).

5. Category weights

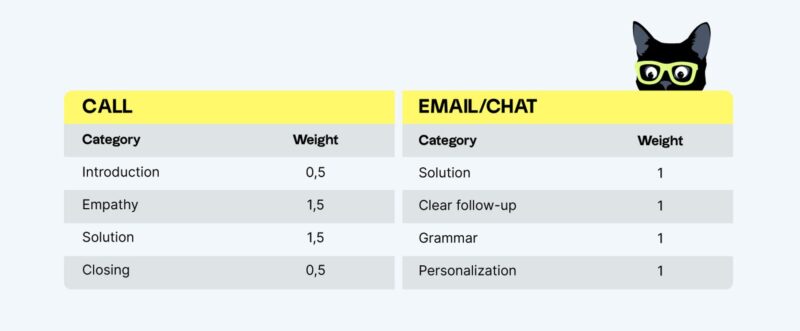

Weights give more importance to the rating categories that matter most to you. You can give equal weight to all of your categories, or assign different weights if some are more important than others. For example, many teams believe that providing the right solution to your customers is more crucial than following all grammar rules.

Then, the category that’s also important for us is “Internal note”. If someone reaches out to another department, their note should be complete — including the who, what, where, when, why, and what they expect the colleague to do in order to help. If they have to call or message for clarification, then it shows that the note was incomplete and we’re wasting time.

Apart from your team’s support goals and values, the exact categories and weights will also depend on the support stream. When talking on the phone, Tone, Empathy, and Closing are more important than Grammar, Follow-up, or Personalization, which matter a lot when responding to an email or a chat.
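If you are building the formula yourself, a weighted score could be calculated roughly like this. It is a sketch under stated assumptions: ratings are expressed as fractions between 0 and 1, the weights (3, 2, 1) are made-up examples, and the function name is hypothetical. It is not how any particular QA tool computes its scores.

```python
def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Return a 0-100 score from per-category ratings (each between 0 and 1).

    Categories with a higher weight pull the final score more strongly.
    """
    missing = set(ratings) - set(weights)
    if missing:
        raise ValueError(f"no weight defined for: {sorted(missing)}")
    total_weight = sum(weights[category] for category in ratings)
    if total_weight == 0:
        raise ValueError("at least one rated category needs a non-zero weight")
    weighted_sum = sum(ratings[category] * weights[category] for category in ratings)
    return 100 * weighted_sum / total_weight


# Assumed example weights: the right solution matters more than perfect grammar.
weights = {"Solution": 3, "Tone": 2, "Grammar": 1}
ratings = {"Solution": 1.0, "Tone": 0.5, "Grammar": 0.0}

score = weighted_score(ratings, weights)
equal_score = weighted_score(ratings, {category: 1 for category in ratings})
print(round(score, 1), equal_score)
```

With these example numbers, the same conversation scores higher under the weighted scheme than under equal weights, because the agent got the most heavily weighted category right. You could keep a separate weights dictionary per support channel if phone and email need different priorities.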

6. Rating scales

In short, your rating scales define how detailed your review results are going to be. But, they also affect the usability of your customer service scorecard. The larger the rating scales become, the more difficult it is to choose how to score customer interactions.

Many teams use a binary rating scale to rate categories on a customer service scorecard, although rating scales can be far more granular:

- 2-point rating scale is used by 47.3% of surveyed customer support professionals

- 3-point rating scale — 35.3%

- 5-point rating scale — 10.9%

- 4-point rating scale — 6.5%

Read more about the pros and cons of different scoring systems →
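Whichever scale you pick, it helps to convert ratings to a common percentage so results stay comparable if you change scales later. The linear mapping below is one simple assumption (lowest rating equals 0%, highest equals 100%); the function name is hypothetical and other conversions are equally valid.

```python
def normalize_rating(rating: int, scale_points: int) -> float:
    """Map a rating on a 1..scale_points scale to a 0-100 percentage.

    Assumes a linear scale where 1 is the worst rating and
    scale_points is the best.
    """
    if scale_points < 2:
        raise ValueError("a rating scale needs at least 2 points")
    if not 1 <= rating <= scale_points:
        raise ValueError(f"rating must be between 1 and {scale_points}")
    return 100 * (rating - 1) / (scale_points - 1)


# A binary scale only yields 0 or 100; larger scales add in-between values.
print(normalize_rating(2, 2))  # top rating on a 2-point scale
print(normalize_rating(2, 3))  # middle rating on a 3-point scale
print(normalize_rating(4, 5))  # second-best rating on a 5-point scale
```

This also shows the trade-off described above: a 2-point scale is quick to use but can only say "pass" or "fail", while a 5-point scale gives more detail at the cost of harder scoring decisions.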

7. Feedback

Last but not least — constructive feedback. If there’s anything to improve or comment on, make sure to include the agent feedback on the customer service scorecard.

For better results, combine conversation reviews with regular, well-structured feedback sessions to help everyone in your team understand their QA scores and grow their skills.

By including all this information in your customer service scorecard, you can make sure everybody works towards the same goals. After all, customer service is a team game, so use your customer service QA scorecard as your game board.

Agorapulse involved the entire team in the process of building their customer service scorecard and QA program. During their annual company retreat, they brainstormed together to come up with a scorecard that everybody understood and agreed with. Once they’d set up their quality assurance program as a team effort, it was easy to get everybody engaged in conversation reviews.

Reviewing two conversations per agent on a daily basis has become an easy habit for managers since they started using a dedicated quality management solution. Read the full case study →

This brings us to the next point…

How to implement a customer service QA scorecard?

There’s one principle that we can’t emphasize enough — customer service scorecards and conversation reviews only work if you really do them.

Again, there are two options to bring your customer service QA scorecard to life:

Let’s (not?) talk about spreadsheets

Excel or Google Sheets usually work for small teams with low conversation volumes. The issue is, with large support teams, they can get messy really fast.

The more people who access the document and the more conversations you review, the more difficult it is to maintain conversation review spreadsheets.

Use QA software instead

We might be a little biased, but we recommend using customer service QA solutions like Klaus to run conversation reviews, build your own customer service QA scorecards, and more.

It takes the emphasis away from the scoring and focuses on what’s more important — using the insights to improve customer service and drive revenue.

By default, your Klaus account comes with three rating categories:

- Solution;

- Empathy/Tone;

- Product knowledge.

However, you can add and customize to your heart’s content.

Take a meowment to consider your reviewers, how many reviews they undertake, and how long you want your support reps to spend on considering their feedback. In other words, don’t turn an informative exercise into one that triggers decision fatigue.

With Klaus, you can balance “traditional” customer service QA scorecards with automated ones. Klaus automatically calculates QA scores based on the following categories:

- Solution

- Tone

- Empathy

- Spelling and grammar

- Greeting

- Closing

Our goal is to continually expand our repertoire of automatic categories to empower your customer support teams further.

Here’s how Klaus helps you manage support quality

- Connecting to your help desk software and automatically pulling conversations for review (be it chats, emails, or calls);

- Allowing you to create custom QA scorecards with unlimited rating categories, so that you don’t have to worry about building formulas to track your Internal Quality Score (IQS);

- Using AI to make sense of your support data, pinpoint problematic cases, and highlight what needs your attention;

- Automatically calculating QA scores: Klaus can score every agent and customer service interaction across multiple categories and languages, helping you achieve 100% coverage;

- Engaging your support reps through continuous feedback & active learning;

- Creating meaningful reports that help you track every agent’s performance over time and share the results of your quality program with the whole company.

The customer service scorecard-building process explained

Whether you decide to switch to a quality management solution or give the customer service scorecard Excel template a try, we hope you’ll start reviewing customer service interactions in no time.

Once you’re at it, keep your QA process short and simple — there’s no point in spending time on customer service scorecards if you’re not going to use them. Avoid unnecessary criteria and complex rating scales that make scoring difficult and prevent your customer support team from consistently doing reviews.

Instead of agonizing over 10 different rating categories, invest your time into leaving meaningful comments and following up on them in your 1:1s.

Keep in mind that customer service QA scorecards don’t have to be set in stone. Tweak your rating categories, weights, or scales if needed, but remember to always inform your support team about any changes that you make. Even the smallest adjustments are likely to impact customer service operations.

Now, go ahead and do a test review! If you need any help with your QA program, quality management platform Klaus is here to help — every step of the way.

By leveraging the powers of Klaus AutoQA, you can automatically score every customer interaction across multiple categories and languages, achieving 100% coverage without any additional resources.

Originally published in August 2021, last updated in January 2024.